Newcomb’s problem is one of the most famous problems in decision theory, the branch of philosophy, probability, and mathematics concerned with how to choose rationally. What’s even more interesting, in my opinion, is that contemporary philosophers are split nearly down the middle on the answer. Here is the paradox, as stated in the Stanford Encyclopedia of Philosophy:

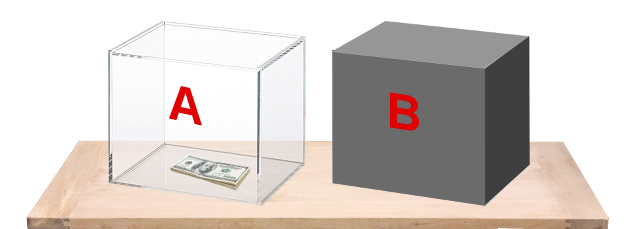

“An agent may choose either to take an opaque box or to take both the opaque box and a transparent box. The transparent box contains one thousand dollars that the agent plainly sees. The opaque box contains either nothing or one million dollars, depending on a prediction already made. The prediction was about the agent’s choice. If the prediction was that the agent will take both boxes, then the opaque box is empty. On the other hand, if the prediction was that the agent will take just the opaque box, then the opaque box contains a million dollars. The prediction is reliable. The agent knows all these features of his decision problem.”

There are two possible answers here: “one-box,” as I’ll call it, or “two-box.” There is no commonly agreed-on “correct” answer, and neither side rests on a logical fallacy or a mistake in reasoning. Instead, the two answers stem from a fundamental disagreement, mostly about the prediction. The predictor is usually imagined as a supercomputer, an all-knowing alien, or some other extremely intelligent being. Exactly what it is does not matter; the point is that it is right the vast majority of the time.

One-boxing. One-boxers argue that this reliable predictor has nearly always been correct in the past. It can tell whether you are going to take both boxes, and if you are, the opaque box will be empty. The argument rests on that track record: others have likely tried to take both boxes before, only to walk away with one thousand dollars. If so, you should not believe yourself to be the exception to the rule. Doing so would be special pleading: believing yourself exempt from something that has undeniably applied to others. Therefore, to make the most money, you should take only the opaque box and walk away with $999,000 more than you would have otherwise.
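The one-boxer’s reasoning can be made concrete as an expected-value calculation. The sketch below is a minimal illustration, assuming the predictor is right with some fixed probability `accuracy` no matter which choice you make; that parameter and the function names are hypothetical, since the problem only says the prediction is “reliable.” Note that this framing treats your choice as evidence of what was predicted, which is exactly the step two-boxers reject.

```python
from fractions import Fraction

TRANSPARENT = 1_000       # visible money in the transparent box
OPAQUE_PRIZE = 1_000_000  # money in the opaque box if one-boxing was predicted


def expected_payoff(choice: str, accuracy: Fraction) -> Fraction:
    """Expected winnings, assuming the predictor is right with probability `accuracy`."""
    if choice == "one-box":
        # The opaque box is full only if the predictor correctly foresaw one-boxing.
        return accuracy * OPAQUE_PRIZE
    if choice == "two-box":
        # You always get the $1,000; the opaque box is full only if the predictor erred.
        return TRANSPARENT + (1 - accuracy) * OPAQUE_PRIZE
    raise ValueError(f"unknown choice: {choice}")


def break_even_accuracy() -> Fraction:
    # Solve p * M = T + (1 - p) * M for p, giving p = (M + T) / (2M).
    return Fraction(OPAQUE_PRIZE + TRANSPARENT, 2 * OPAQUE_PRIZE)


for p in (Fraction(1, 2), Fraction(9, 10), Fraction(99, 100)):
    one = expected_payoff("one-box", p)
    two = expected_payoff("two-box", p)
    print(f"accuracy={float(p):.2f}  one-box=${float(one):,.0f}  two-box=${float(two):,.0f}")

print(f"one-boxing wins on average once accuracy > {float(break_even_accuracy()):.4f}")
```

Under these assumptions, one-boxing comes out ahead on average as soon as the predictor is right more than 50.05 percent of the time, so a predictor that is merely “reliable” already favors it by a wide margin.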

Two-boxing. Two-boxers base their argument on the fact that the predictor has already decided whether to put the $1 million in the opaque box before you act. If that decision is already made, nothing you do now can affect it. In other words, the predictor has scanned you and then left the room. Anything you do now is outside the predictor’s jurisdiction; it cannot change its prediction. If this is true, then you should take the transparent box in addition to the opaque one. There may or may not be money in the opaque box, but taking the transparent box won’t change that. So it would be foolish to leave an extra $1,000 on the table.
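The two-boxer’s reasoning is a dominance argument: hold the contents of the opaque box fixed, and taking both boxes always pays $1,000 more. Here is a minimal Python sketch of that payoff table; the constant and function names are illustrative only.

```python
TRANSPARENT = 1_000       # visible money in the transparent box
OPAQUE_PRIZE = 1_000_000  # money in the opaque box when it is full


def payoff(take_both: bool, opaque_full: bool) -> int:
    """Winnings once the opaque box's contents have already been fixed."""
    opaque = OPAQUE_PRIZE if opaque_full else 0
    return opaque + (TRANSPARENT if take_both else 0)


# For each possible (already decided) state of the opaque box,
# compare taking both boxes with taking only the opaque one.
for opaque_full in (True, False):
    one = payoff(take_both=False, opaque_full=opaque_full)
    two = payoff(take_both=True, opaque_full=opaque_full)
    state = "full" if opaque_full else "empty"
    print(f"opaque box {state}: one-box=${one:,}  two-box=${two:,}")

# Two-boxing dominates if it is strictly better in every fixed state.
two_box_dominates = all(payoff(True, full) > payoff(False, full) for full in (True, False))
print("two-boxing is better in every fixed state:", two_box_dominates)
```

What the table leaves out is any link between your choice and the box’s contents: it treats the state of the opaque box as independent of what you do, which is precisely the assumption one-boxers dispute.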

My immediate response was that one-boxing was the way to go. After reading both sides of the argument, I understand the other side too. Now, however, I think the problem is not just a problem but a paradox: it is unanswerable. I think much of the disagreement hinges on the predictor, which is probably why it was deliberately left vague in the Stanford Encyclopedia’s statement of the problem. Still, two things can be known for sure: the predictor is reliable, and the prediction was already made. Let’s start with what the second point means.

The fact that the prediction was made in advance means that the predictor looks at more than just your current intentions. It can take in your upbringing, your long-term goals, the people you provide for, and everything else. Given all of this, and given that it is reliable, it is not a stretch to say that the predictor can form an accurate picture of who you are.

I’m sure you can see where this is going. We have now gotten ourselves into what looks like a free will versus determinism debate. Are you determined to pick one box or two, and can the predictor reliably sense this? Or can you step outside the past actions, nurture, and nature that have made you who you are and pick something else? Before you answer: it’s a trick question. Even if we somehow do have free will, I don’t believe there is a strong case for making a decision that is 100 percent outside your “determined” nature. When philosophers talk about free will, they usually mean the ability to “have done otherwise,” not the ability to take an “action completely outside your personality, ability, or character.” The latter seems impossible.

We must also be careful not to inject retrocausality into the situation. As far as I understand, an effect cannot precede its cause. Perhaps it can at the quantum level, but even if so, I don’t think that has anything to do with this problem.

Therefore, even on the most libertarian view of free will, the predictor makes its prediction before you make your decision. Your decision thus cannot influence what is in the box, and pretending that it can means invoking retrocausality, which makes for a very weak argument.

For the two-box strategy to pay off fully, you would have to take both boxes without ever having been someone who wanted to take both boxes. If you had been that person, the predictor would already have sensed it, and you could not fool it. But this reasoning works both ways. For the one-box strategy to pay off, you would also have to be a person who takes one box without ever being someone who would two-box given the chance. Both strategies depend on an instantaneous, free-will-style decision, and neither should be relied on for certainty.

Newcomb’s problem is a paradox because it centers on the chooser and models the game around their decisions. It is therefore impossible to prescribe a general rule for it, because the predictor’s decision depends on the subjective character of the player. If it senses that you are someone who will take both boxes, the opaque box will not contain the $1 million. If it senses that you will take only one, the $1 million will be there. Either way, it’s foolish to count on outsmarting the predictor, or on making some split-second decision that is not based on the character the predictor has already perceived.

So my solution is to stick with your intuition. Ironically, after all this reasoning, I find myself back where I started. My first thought was to one-box, the predictor would reliably sense this, and so I should one-box. Two-boxing might be the flashier option for me, but I cannot realistically pick two boxes without having thought about two-boxing beforehand. If I had, the predictor would have sensed it, and I would probably end up with only $1,000. However, if you are already inclined to take two boxes, then you should take both. It wouldn’t make sense to leave the $1,000 on the table, and the predictor is not 100 percent accurate, so you might still get the $1 million.